The Clinical Design Loop

How clinical innovation actually becomes care

Abstract

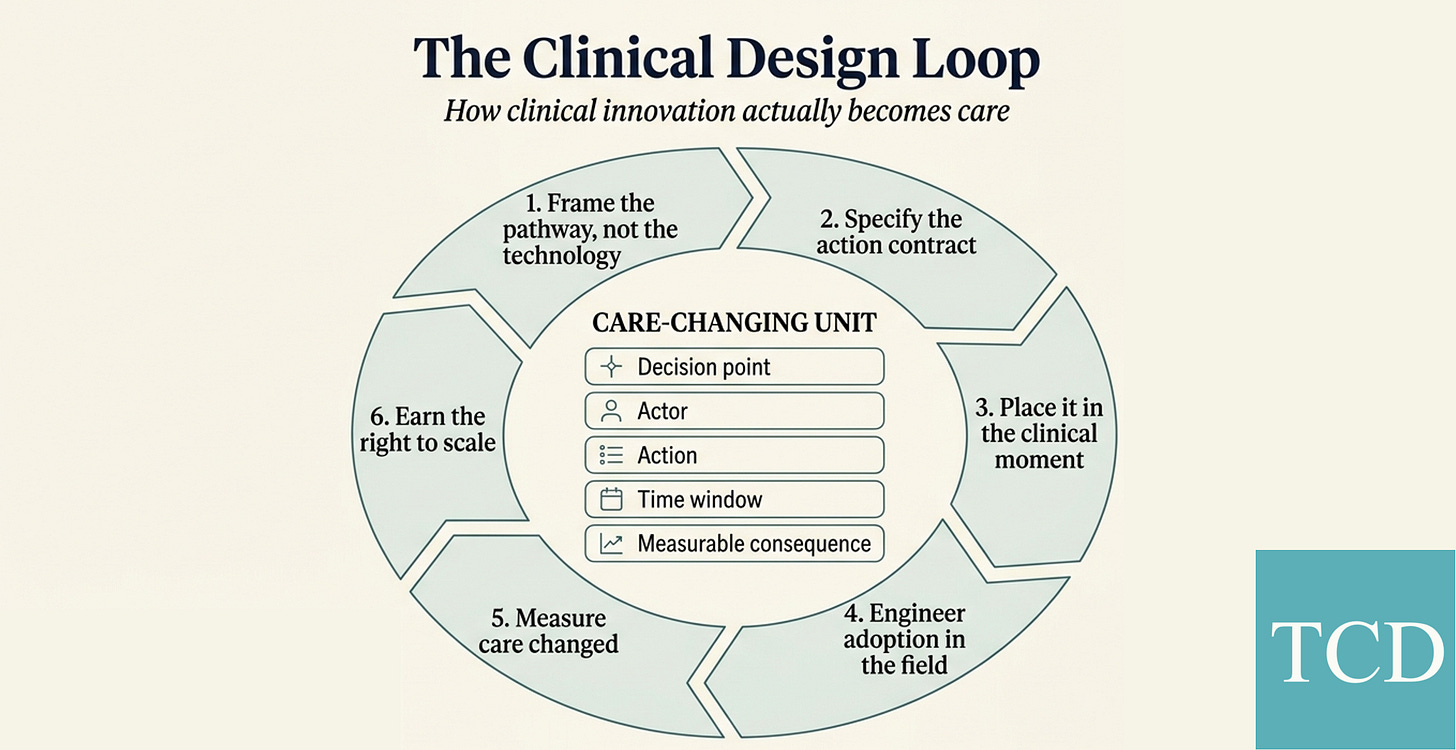

A prediction displayed on a screen is not care. A biomarker measured in a lab is not a treatment decision. The care-changing unit is the decision point, the actor, the action, the time window, and the measurable consequence. This piece introduces the Clinical Design Loop: the operating sequence for making that unit hold under real conditions.

The recurring mistake

In a cluster-randomised trial published recently, a predictive deterioration model was displayed on the screens of cardiology wards across 10,422 inpatient visits. The analytics appeared informative. Clinicians could see who was getting sicker. The trial was rigorous. The primary outcome did not move. The intervention was described, in the paper itself, as “passive display with no specific response mandated.” About 11% of patients were moved between display and non-display beds during the study. 1

Around the same time, another AI was doing something very different in German breast screening. PRAIM enrolled 463,094 women and 119 radiologists. Cancer detection rose from 5.7 to 6.7 per 1,000. Recalls went down, not up (37.4 vs 38.3 per 1,000). A safety net triggered 3,959 times, was accepted 1,077 times, and surfaced 204 cancers that would otherwise have been missed. 2

Two AI systems with credible technical signal. Only one was built as care. The difference wasn’t a better model. It was a designed response.

Healthcare keeps treating technical performance as almost the same thing as clinical impact. It isn’t. Performance sits upstream of care. Between them lies a gap that no model, no biomarker, and no therapy can cross on its own. That gap is where clinical design lives.

The same pattern shows up well beyond AI. ctDNA assays can identify residual disease in stage II colon cancer with remarkable precision. Multi-cancer early detection tests can flag signals from a single tube of blood. Early results from the NHS-Galleri trial, with over 140,000 volunteers, reported that its main aim, reducing the number of cancers diagnosed at stage III–IV, was not met “in a statistically definite way.” Elegant detection doesn’t automatically translate into population value. 3

What actually changes care

If the unit of analysis isn’t the model, the test, or the therapy, what is it?

The useful primitive is what I’ll call the care-changing unit: a decision point in a pathway, a named actor with the authority to act on it, a concrete action (order, defer, escalate, refer, prescribe, monitor), a time window in which that action has to land, and a measurable consequence downstream. Five things, tied together, or nothing changes.
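The care-changing unit is concrete enough to write down as a record. Below is a minimal, hypothetical Python sketch. The class, the field names and the two example units are illustrative only and are not part of any published tool. It treats a unit as incomplete whenever any of the five elements is left unspecified.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class CareChangingUnit:
    """Hypothetical record of the five linked elements; names are illustrative."""
    decision_point: Optional[str] = None  # where in the pathway the decision sits
    actor: Optional[str] = None           # named role with authority to act
    action: Optional[str] = None          # e.g. order, defer, escalate, refer, prescribe, monitor
    time_window: Optional[str] = None     # when the action has to land
    consequence: Optional[str] = None     # measurable downstream effect

    def missing(self) -> list[str]:
        """Names of the elements that are empty or unspecified."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def changes_care(self) -> bool:
        # All five must be tied together; any gap means nothing changes.
        return not self.missing()


# PRAIM-style unit, paraphrased from the text.
praim = CareChangingUnit(
    decision_point="recall decision in a screening read",
    actor="radiologist",
    action="re-review the flagged case",
    time_window="within the reading session",
    consequence="recall decision changes",
)

# Passive display: a score is shown, but nothing else is specified.
passive = CareChangingUnit(decision_point="deterioration score shown on ward screen")

print(praim.changes_care(), passive.missing())
```

Seen this way, the passive-display case is not a weaker unit. It is an incomplete one, missing the actor, action, time window and consequence.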

That framing moves what we look at. PRAIM’s care-changing unit isn’t “AI reads mammograms.” It’s: a suspicious case gets surfaced, a radiologist is prompted to re-review, and a recall decision changes, within the reading session. 4

DYNAMIC’s care-changing unit isn’t “ctDNA can be measured in stage II colon cancer.” It’s: post-operative ctDNA status reclassifies a patient, the oncologist’s decision on adjuvant chemotherapy changes, and longitudinal ctDNA clearance reshapes how residual risk is read over the next several years. With median follow-up of 59.7 months, outcomes in the ctDNA-guided and standard-management arms were near-identical (5-year RFS 88% vs 87%; OS 93.8% vs 93.3%), while ctDNA clearance was seen in 35 of 40 treated ctDNA-positive patients. The value of the assay isn’t that it exists; it’s that it rewires who gets adjuvant therapy and how residual risk is read over time. 5

FFR-CT’s care-changing unit isn’t “we can derive physiology from a coronary CT.” It’s: after a CCTA, the decision branch on whether to send a patient for invasive angiography, further non-invasive testing, or medical management shifts, based on the FFR-CT result. 6

Once you start seeing care this way, a lot of familiar debates quietly collapse. Is the model good enough? Is the test sensitive enough? Is the therapy cost-effective? All necessary questions. None of them decide whether care changes.

The Clinical Design Loop

Clinical Design begins when we stop asking whether an innovation works in principle and start asking whether it can hold as a decision-action chain under real conditions.

Six stages. Not a ladder, but a loop: a repeatable operator sequence for turning innovation into care.

1. Frame the pathway, not the technology

Start with the pathway node you want to move. Which decision, in which pathway, for which population? Framing the work around the algorithm, the biomarker, or the therapy almost always produces an innovation looking for a decision to influence. That order rarely survives contact with a busy clinic.

2. Specify the action contract

Who acts, on what signal, with what threshold, in what timeframe, and with what safety boundaries. This is the stage where most innovations quietly die.
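An action contract can be sketched as a small routing rule. The Python below is a hypothetical illustration. The roles, the 0.7 threshold and the 30-minute window are invented for the example. The safety boundary is modelled here as one possible choice: escalation to a fallback owner once the window lapses.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class ActionContract:
    """Hypothetical contract: who acts, on what signal, at what threshold, by when."""
    actor: str             # who acts
    signal: str            # on what signal
    threshold: float       # signal value at or above which the contract fires
    window: timedelta      # how long the actor has to act
    fallback_actor: str    # assumed safety boundary: escalation owner if the window lapses

    def route(self, value: float, elapsed: timedelta) -> str:
        """Return who owns the next action for a signal value and time since it fired."""
        if value < self.threshold:
            return "no action required"
        if elapsed <= self.window:
            return self.actor
        return self.fallback_actor


# Illustrative values only.
contract = ActionContract(
    actor="ward registrar",
    signal="deterioration score",
    threshold=0.7,
    window=timedelta(minutes=30),
    fallback_actor="critical care outreach",
)

print(contract.route(0.8, timedelta(minutes=10)))
print(contract.route(0.8, timedelta(minutes=45)))
```

The design choice is deliberate: every input maps to a named owner or to an explicit "no action". A passive display, by contrast, has no such mapping.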

The ADMINISTER trial is small but sharp: a digital heart-failure consult intervention in 150 patients. Optimisation of guideline-directed medical therapy (GDMT) was 1.19 vs 0.08 (intervention vs usual care). Optimal medical therapy (OMT) at 12 weeks was 28.2% vs 6.9%. The hazard ratio for time to OMT was 4.51. Remote consults were 2.0 vs 1.0, while physical consults were roughly similar (1.2 vs 1.4). The innovation isn’t “digital follow-up.” It’s a designed medication-optimisation micro-pathway with a clear action contract at each step. 7
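To make the OMT (optimal medical therapy) figures tangible, here is crude, unadjusted arithmetic on the two proportions quoted above. It assumes the first figure is the digital-consult arm. The number needed to treat shown here is a back-of-envelope derivation, not a figure reported by the trial.

```python
# Crude, unadjusted arithmetic on the OMT-at-12-weeks proportions quoted in the text.
# Assumption: 28.2% is the digital-consult arm, 6.9% the comparator.
omt_intervention = 0.282
omt_comparator = 0.069

absolute_difference = omt_intervention - omt_comparator  # extra share of patients at OMT
extra_per_100 = absolute_difference * 100                 # extra patients at OMT per 100 enrolled
crude_nnt = 1 / absolute_difference                       # patients enrolled per one additional at OMT

print(f"absolute difference: {absolute_difference:.3f}")
print(f"extra patients at OMT per 100 enrolled: {extra_per_100:.1f}")
print(f"crude number needed to treat: {crude_nnt:.1f}")
```

Roughly one additional patient reached OMT for every five enrolled. That is the kind of pathway delta a designed micro-pathway should make visible.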

3. Place it in the clinical moment

The intervention has to land where the decision happens. This is what interoperability really means. Not data exchange in the abstract, but semantic placement at the point of action. An API that delivers a result at 3 a.m. is useless if the decision happens at 8 a.m. somewhere the result never appears. That’s why PRAIM worked through the viewer, not around it: the prompt landed inside the reading session, where the recall decision actually gets made. 8
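The 3 a.m. versus 8 a.m. example reduces to two conditions: the result must arrive in time, and it must appear on the surface where the decision is made. Below is a minimal Python sketch under that assumption. The class names, surface labels and timestamps are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Delivery:
    arrived_at: datetime  # when the result reached a system
    surface: str          # where it appeared (hypothetical labels)


@dataclass
class DecisionMoment:
    decided_at: datetime  # when the decision is actually taken
    surface: str          # where the decision-maker is looking at that time


def lands_in_moment(delivery: Delivery, moment: DecisionMoment) -> bool:
    """True only if the result is there in time AND on the surface where the decision happens."""
    return delivery.arrived_at <= moment.decided_at and delivery.surface == moment.surface


# API result delivered overnight to a system nobody opens on the ward round.
api_result = Delivery(datetime(2024, 3, 1, 3, 0), "integration inbox")
ward_round = DecisionMoment(datetime(2024, 3, 1, 8, 0), "ward round view")

# PRAIM-style prompt inside the reading viewer, during the session.
viewer_prompt = Delivery(datetime(2024, 3, 1, 10, 15), "reading viewer")
reading = DecisionMoment(datetime(2024, 3, 1, 10, 20), "reading viewer")

print(lands_in_moment(api_result, ward_round), lands_in_moment(viewer_prompt, reading))
```

Early delivery is not enough on its own. The overnight result fails on placement, not timing.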

4. Engineer adoption in the field

Training, rollout, champions, behaviour, learning curves, iteration. Adoption is real work and a genuine constraint. It’s also not the whole Loop. Plenty of innovations get adopted enthusiastically and still fail to change care, because something else in the chain is broken.

5. Measure care changed

Evidence is a run-rate property of the pathway, not a pre-launch event. Measure action, not just display. Measure pathway deltas, not just model AUC. In PRAIM, the evidence wasn’t only higher detection; it was 3,959 safety-net triggers, 1,077 acceptances, and 204 cancers surfaced that would otherwise have been missed. If you can’t show a delta in action, you haven’t yet shown clinical implementation.
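The PRAIM figures can be read as conversion rates rather than raw counts. The Python below is crude arithmetic on the numbers quoted in the text. The relative detection gain is computed from the rounded per-1,000 rates, so it may differ slightly from the study's own estimates.

```python
# PRAIM figures as quoted in the text.
triggers = 3_959  # safety-net triggers
accepted = 1_077  # triggers the radiologist accepted
cancers = 204     # cancers surfaced via the safety net

acceptance_rate = accepted / triggers     # share of signals that led to action
cancers_per_trigger = cancers / triggers  # yield per signal raised

# Detection per 1,000 screened (AI-supported vs comparator), rounded as published.
detection_ai, detection_comparator = 6.7, 5.7
relative_gain = detection_ai / detection_comparator - 1

print(f"acceptance rate: {acceptance_rate:.1%}")
print(f"cancers per trigger: {cancers_per_trigger:.1%}")
print(f"relative detection gain: {relative_gain:.1%}")
```

Run as written, this gives about 27.2% acceptance, 5.2% cancers per trigger and a 17.5% relative gain in detection. All three are measures of action, not of display.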

6. Earn the right to scale

Scaling isn’t the natural consequence of technical success. It’s earned through financing, budget-owner fit, governance, system capacity, and reimbursement or guideline legitimacy.

The NHS FFR-CT rollout is the cleanest example. Across 90,553 CCTA patients, 7,863 received FFR-CT. Twenty-seven hospitals implemented within 12 months. Median time from funding to go-live was 4.7 months. A national Innovation and Technology Payment, alongside NICE guidance, carried the test into routine commissioning. Fifty-four sites were commissioned by the end of the programme, yet that still meant only 54 of 124 acute trusts (44%) were using it at 3 years. Invasive angiography dropped from 16% to 14.9% (aHR 0.93). Downstream tests fell from 189 to 167 per 1,000 (HR 0.88). Scale, here, was pathway design plus payment plus operational readiness. Enthusiasm was never going to be enough. 9
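The scale figures are easier to compare once they are turned into rates. Below is crude arithmetic on the numbers quoted above. The ratio of downstream tests is unadjusted, so it only approximates the reported HR of 0.88, which comes from the study's own adjusted model.

```python
# NHS FFR-CT figures as quoted in the text.
ccta_patients = 90_553
ffrct_patients = 7_863
trusts_using, acute_trusts = 54, 124
tests_before, tests_after = 189, 167   # downstream tests per 1,000
angio_before, angio_after = 0.16, 0.149  # invasive angiography rate

uptake = ffrct_patients / ccta_patients            # share of CCTA patients who got FFR-CT
coverage = trusts_using / acute_trusts             # share of acute trusts using it at 3 years
tests_avoided_per_1000 = tests_before - tests_after
crude_test_ratio = tests_after / tests_before      # unadjusted; not the study's HR
angio_drop_points = (angio_before - angio_after) * 100

print(f"FFR-CT uptake among CCTA patients: {uptake:.1%}")
print(f"acute trusts using FFR-CT: {coverage:.1%}")
print(f"downstream tests avoided per 1,000: {tests_avoided_per_1000}")
print(f"crude downstream-test ratio: {crude_test_ratio:.2f}")
print(f"invasive angiography drop: {angio_drop_points:.1f} percentage points")
```

Run as written, this gives about 8.7% uptake and 43.5% trust coverage. Under a national payment, fewer than one in ten CCTA patients received the test, which shows how much of scale is operational.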

The same logic applies well beyond digital tools. From ctDNA-guided adjuvant decisions to CAR-T referral pathways, and from ATTR-CM treatment pathways to any new targeted therapy trying to cross into routine practice, breakthrough science only becomes care when someone owns the next action inside a real pathway.

Why it’s a loop, not a ladder

The six stages look linear on the page. In the field they don’t behave that way. When adoption fails, the answer is often not more training but better placement in the clinical moment. When evidence is weak, the problem is often not the endpoint but the action contract upstream of it. When economics fail, the issue usually isn’t price alone; it’s the pathway node you chose to redesign in the first place.

That’s the diagnostic value of the Loop. Each stage sends you back to a specific earlier stage when it breaks. A ladder lets you fall. A loop tells you where.
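The routing described above can be written as a small lookup. In the Python sketch below, the three routes come from the examples in the text. The stage names are shorthand, and defaulting to the failing stage itself is an assumption made for illustration. In practice other routes exist.

```python
from enum import IntEnum


class Stage(IntEnum):
    FRAME_PATHWAY = 1
    ACTION_CONTRACT = 2
    CLINICAL_MOMENT = 3
    FIELD_ADOPTION = 4
    MEASURE_CARE = 5
    EARN_SCALE = 6


# Routes taken from the examples in the text; not an exhaustive map.
REVISIT = {
    Stage.FIELD_ADOPTION: Stage.CLINICAL_MOMENT,  # adoption fails -> better placement, not more training
    Stage.MEASURE_CARE: Stage.ACTION_CONTRACT,    # weak evidence -> the action contract upstream
    Stage.EARN_SCALE: Stage.FRAME_PATHWAY,        # economics fail -> the pathway node chosen
}


def where_to_look(failed: Stage) -> Stage:
    """Earlier stage to revisit; assumed default is the failing stage itself."""
    return REVISIT.get(failed, failed)


print(where_to_look(Stage.MEASURE_CARE).name)
```

Every recorded route points backwards. That is the practical difference between a loop and a ladder.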

Where this sits next to implementation science

Implementation science already gives us a great deal. RE-AIM, PRISM, CFIR 2.0, NASSS, NPT, SEIPS, Learning Health Systems: each one sharpens a different aspect of how change happens inside real systems. The Clinical Design Loop isn’t a rejection of any of that. 10, 11, 12 Implementation science gives us diagnostic depth. Clinical Design adds operator sequence.

AEIOU is the scorecard, not the sequence

If you’ve read the Clinical Design Framework, you already know AEIOU: Adoption, Evidence, Interoperability, Ownership, Unit Economics. It’s easy to confuse a scorecard with a method.

AEIOU tells you what must be true. The Loop tells you in what order to make it true.

The vowels are the five constraints any real clinical innovation must eventually satisfy. The Loop is the transversal operating method, cutting across all five.

A light mapping, to be used loosely. Ownership is most visible when you specify the action contract. Interoperability and Adoption show up in clinical-moment placement and in field engineering. Evidence lives inside measuring care changed. Unit Economics becomes unavoidable when you try to earn the right to scale. Light on purpose. Forcing one Loop stage onto one vowel would weaken the architecture.

What to measure if you want to know whether care changed

A displayed tool is not an implemented intervention. The distance between “tool is live” and “care has changed” is where most innovation stories go quiet.

A useful operator metric bank lives on a few axes. Time, first: time to review, time to action, time to treatment. Did the decision happen faster, and did the action land inside its clinical window? Then signal-to-response conversion: of all the signals the tool produced (alerts, flags, recommendations, safety-net triggers), what fraction led to a specified action, and in which direction?
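Time to action and signal-to-response conversion can both be computed from an event log. Below is a minimal Python sketch. It assumes a hypothetical log in which each signal records when it was raised, when (if ever) a specified action followed, and the clinical window that action had to land in. The field names and the four sample events are invented for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class SignalEvent:
    raised_at: datetime
    actioned_at: Optional[datetime]  # None if no specified action followed
    window: timedelta                # clinical window the action had to land in


def conversion_rate(events: list[SignalEvent]) -> float:
    """Fraction of signals that led to a specified action."""
    return sum(e.actioned_at is not None for e in events) / len(events)


def in_window_rate(events: list[SignalEvent]) -> float:
    """Fraction of signals actioned inside their clinical window."""
    return sum(
        e.actioned_at is not None and e.actioned_at - e.raised_at <= e.window
        for e in events
    ) / len(events)


def median_time_to_action(events: list[SignalEvent]) -> Optional[timedelta]:
    delays = [e.actioned_at - e.raised_at for e in events if e.actioned_at is not None]
    return median(delays) if delays else None


# Hypothetical log: four signals, a 30-minute window each.
t0 = datetime(2024, 3, 1, 8, 0)
win = timedelta(minutes=30)
events = [
    SignalEvent(t0, t0 + timedelta(minutes=10), win),
    SignalEvent(t0, t0 + timedelta(minutes=50), win),  # actioned, but too late
    SignalEvent(t0, None, win),                        # never actioned
    SignalEvent(t0, t0 + timedelta(minutes=20), win),
]

print(conversion_rate(events), in_window_rate(events), median_time_to_action(events))
```

Separating "actioned" from "actioned in window" matters. The late action still counts toward conversion but not toward care landing inside its clinical window.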

After that, downstream utilisation. Did the pathway deltas show up where they should (recalls, biopsies, invasive angiography, adjuvant chemotherapy, hospital admissions), and not somewhere they shouldn’t? Workflow telemetry sits underneath all of this: EHR use metadata, event logs, clickstream. It tells you whether the tool is actually being engaged with, by whom, at which points in the shift, and at what cognitive cost. The FDA and others are building post-market monitoring frameworks for AI-enabled devices around exactly this kind of signal. 13, 14, 15

Finally, variance. Site variability, drift, learning curves. What looks like one intervention across a network is usually several. Measuring variance across sites, across months, across clinician groups, tells you whether the care change is durable or a local hero effect.
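One simple way to check for a local hero effect is to summarise how a per-site rate spreads across the network. The Python sketch below uses the range and the coefficient of variation. These are one choice among several dispersion measures, not a method prescribed by the text, and the site names and rates are hypothetical.

```python
from statistics import mean, pstdev


def site_spread(rates: dict[str, float]) -> dict[str, float]:
    """Summarise how much a per-site rate (e.g. signal-to-action conversion) varies across sites."""
    values = list(rates.values())
    avg = mean(values)
    return {
        "mean": avg,
        "range": max(values) - min(values),
        "cv": pstdev(values) / avg,  # coefficient of variation; assumes avg > 0
    }


# Hypothetical conversion rates: one site carrying the network vs an even spread.
uneven = site_spread({"site_a": 0.1, "site_b": 0.1, "site_c": 0.4})
even = site_spread({"site_a": 0.2, "site_b": 0.2, "site_c": 0.2})

print(uneven, even)
```

Both networks have the same mean, but only one is a single intervention. The same comparison can be run across months or clinician groups to look for drift and learning curves.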

Two lines worth carrying into every review.

Measure care changed, not tool displayed.

Prediction without a designed response is information. Prediction inside a specified workflow is care.

Clinical Design as a category

Healthcare doesn’t suffer from a lack of innovation. The pipeline is full. The clinical journals are full. The pilots are full. What’s scarce is the operator discipline that turns any of this into pathway-native action.

Adoption is necessary and insufficient. Interoperability, done well, is semantic placement at the decision point. Ownership isn’t an org-chart detail; it’s a safety variable. Evidence is a run-rate property, not a launch hurdle. Scale must be earned.

Clinical Design is the discipline that binds these together. It’s the operator layer: the thing a hospital operator, a pharma executive, or a policymaker can use on a Monday morning to interrogate a specific innovation and ask whether it’s on a credible path to becoming care.

The future won’t belong to the organisations with the most models, the most biomarkers, or the most pilots. It will belong to those that can turn innovation into durable routines of care. That is what Clinical Design is for.

AEIOU are the five constraints. The Clinical Design Loop is how you survive them.

Note & disclaimers

Context: The Clinical Decade (and this article) explore the theoretical foundations of Clinical Design, a teaching framework created by Marcos Gallego Llorente. It has been developed through independent research and academic activities, and is shared here as a personal contribution to the field.

Independence: Views and materials published in The Clinical Decade are personal/independent and do not represent any employer, client, or institution.

License: Licensed under Creative Commons Attribution–NonCommercial–NoDerivatives 4.0 International (CC BY-NC-ND 4.0), unless otherwise stated.

Where do you see most clinical innovations fail today: action contract, clinical placement, or scale economics? Comment here!