Before You Run the Pilot, Run the Loop

An operator manual for turning clinical innovation into care.

Executive Summary (TL;DR)

The Problem: Most clinical innovation reviews start in the wrong place. They grade the model, the biomarker, the device or the dashboard. They rarely grade the action that has to change inside the pathway.

The Object: The unit of analysis is the care-changing unit, a single sentence with six slots: population, decision point, named actor, action, time window, and measurable consequence. If you can’t write the sentence, you don’t yet have an implementable clinical innovation; you have a technical asset in search of a pathway.

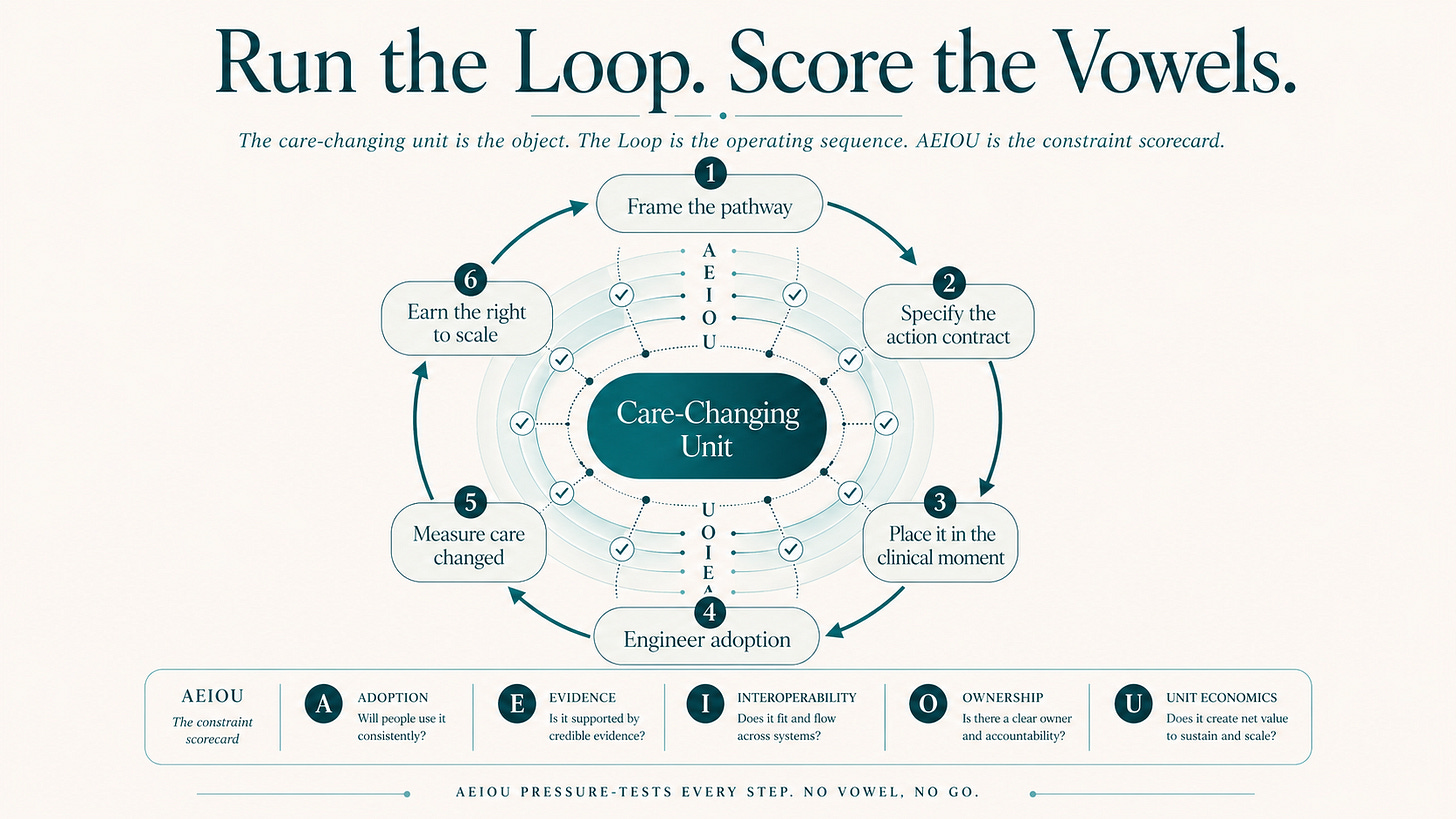

The Sequence: The Clinical Design Loop is the operator method: six stages, run before the pilot and scored at every stage. The stages are framing the pathway, specifying the action contract, placing it in the clinical moment, engineering adoption in the field, measuring care changed, and earning the right to scale.

The Scorecard: The Vowels of Clinical Design, AEIOU (Adoption, Evidence, Interoperability, Ownership, Unit Economics), sit inside each stage as a pressure test. AEIOU names what must be true. The Loop tells you in what order to make it true.

The Diagnostic: The Loop is a failure localisation tool. Most failed innovations don’t fail everywhere. They fail at one link in the decision-action chain that nobody designed.

The Discipline: A care-change dashboard with eight metrics replaces the standard adoption deck (logins, sites live, alerts fired). Action delta, action latency, signal-to-action conversion, pathway delta, variance and durability are the metrics that matter most.

The Operator Rule: Run the Loop. Score the vowels. Redesign where the chain breaks. Then ask whether the action changed.

1. The Steering Committee Scenario

A hospital innovation committee is reviewing a promising AI tool. The model performs well in retrospective tests. The vendor has a flagship pilot site. The clinical team is interested. The slide deck name-checks adoption, evidence and ROI. The CMO is leaning toward yes.

Nobody in the room has yet answered the only question that matters: what exactly will change in care?

Variations of this scene are happening this quarter inside hospital systems, payer organisations, biotech commercial teams and venture investment committees. The technology in front of the committee is increasingly good. The review discipline is mostly the same as it was in 2018.

The object of a Clinical Design review is the care-changing unit, sitting one layer underneath the technology. Before reviewing any innovation, the committee should be able to fill in a single sentence:

In [population], at [decision point], [actor] will take [action] within [time window], producing [measurable consequence].

If the sentence cannot be written, what’s on the agenda is a technical asset in search of a pathway, with the clinical innovation review still to come.

That’s where the Loop begins.

2. The Architecture: Object, Sequence, Scorecard

The previous edition of The Clinical Decade introduced the Clinical Design Loop as the operating sequence for moving from innovation to care. This piece converts the Loop from a concept into an operator tool, and shows how AEIOU sits inside it.

The architecture is short enough to say out loud:

The care-changing unit is the object.

The Clinical Design Loop is the operating sequence.

AEIOU is the constraint scorecard.

Or, in operator language:

Run the Loop. Score the vowels. Redesign where the chain breaks.

AEIOU names the five constraints any clinical innovation must survive at run-rate:

· Adoption. Will humans use it inside the clinical moment?

· Evidence. Can value be proven in the wild, not only in a study?

· Interoperability. Does data land with meaning where the decision happens?

· Ownership. Who owns the next action, the risk and the governance?

· Unit Economics. Who pays, who benefits, and can the model scale sustainably?

The Loop tells you where you are in the design process. AEIOU tells you what kind of failure you’re carrying.

Avoid the common temptation to pin one Loop stage to one vowel. Ownership is most visible in the action contract, and again in scale governance. Evidence is loudest at measurement, yet depends on whether the pathway denominator was framed correctly upstream. Interoperability looks technical, but reduces to semantic placement once the signal has to land in the decision window. Adoption isn’t training. Unit Economics isn’t pricing.

Each Loop stage carries a different AEIOU pressure. AEIOU sits inside the Loop, not beside it.

A Loop stage with a key vowel in red doesn’t get a green light to scale, no matter how good the slide deck looks.

3. The Care-Changing Unit: Where Impact Starts

A care-changing unit has six slots:

1. a population the unit applies to

2. a decision point in a pathway

3. a named actor with the authority to act

4. a concrete action

5. a clinically meaningful time window

6. a measurable consequence

The core impact chain is short:

decision → actor → action → window → consequence

The population defines where that impact chain applies. No chain, no implementation.
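One way to make the six-slot discipline concrete is to treat the care-changing unit as a record that refuses to render its sentence until every slot is filled. The sketch below is a minimal Python illustration under that assumption; the class name, field names and the example ward values are hypothetical, not part of the framework’s own tooling.

```python
from dataclasses import dataclass, fields


@dataclass
class CareChangingUnit:
    """The six slots of a care-changing unit (hypothetical field names)."""
    population: str
    decision_point: str
    actor: str
    action: str
    time_window: str
    consequence: str

    def missing_slots(self) -> list[str]:
        # A slot counts as missing if it is empty or whitespace only.
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def sentence(self) -> str:
        # No chain, no implementation: refuse to render an incomplete unit.
        missing = self.missing_slots()
        if missing:
            raise ValueError("missing slots: " + ", ".join(missing))
        return (
            f"In {self.population}, at {self.decision_point}, {self.actor} "
            f"will take {self.action} within {self.time_window}, "
            f"producing {self.consequence}."
        )


# Hypothetical example: a ward unit written without a time window.
ward = CareChangingUnit(
    population="adult inpatients on a general ward",
    decision_point="deterioration risk crossing a defined threshold",
    actor="the responsible nurse",
    action="an escalated bedside assessment",
    time_window="",
    consequence="fewer deterioration events",
)
print(ward.missing_slots())  # ['time_window']
```

Calling `ward.sentence()` here raises an error rather than producing a sentence with a hole in it, which is the review behaviour the six slots are meant to force.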

The cleanest way to feel the discipline is a head-to-head between two AI deployments that sound similar and behave very differently.

PRAIM versus CoMET: designed action versus passive display

The careless framing of PRAIM is “AI reads mammograms”. The care-changing unit is closer to this:

In population-based mammography screening, during the radiologist reading session, an AI safety net prompts re-review of cases initially judged unsuspicious, allowing the radiologist to change the recall decision before reporting is final, increasing cancer detection without increasing recall.

The numbers behind that sentence give it weight. PRAIM was deployed across German population-based mammography screening, with 463,094 women screened and 119 radiologists involved. Cancer detection rose from 5.7 to 6.7 per 1,000 (a 17.6% relative increase in adjusted detection). The recall rate went down. The safety-net function triggered 3,959 times, was accepted 1,077 times, and surfaced 204 cancers that would otherwise have been missed.[1]
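Read through the care-change dashboard lens, the safety-net figures form a small signal-to-action funnel. The sketch below simply divides each reported count by the one before it; it assumes, as the sentence above reads, that the 204 cancers came from the 1,077 accepted prompts, and the function name is illustrative.

```python
def funnel(stages: list[tuple[str, int]]) -> list[tuple[str, int, float]]:
    """Attach to each stage its conversion rate from the previous stage."""
    result = []
    previous = None
    for name, count in stages:
        rate = 1.0 if previous is None else count / previous
        result.append((name, count, rate))
        previous = count
    return result


# Counts as reported for the PRAIM safety net.
praim_safety_net = [
    ("safety net triggered", 3959),
    ("prompt accepted", 1077),
    ("cancers surfaced", 204),
]

for name, count, rate in funnel(praim_safety_net):
    print(f"{name:<22}{count:>6}{rate:>8.1%}")
```

On these figures, roughly 27% of safety-net prompts were accepted, and about 19% of accepted prompts surfaced a cancer.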

The careless framing of CoMET is “AI deterioration alerts on the wards”. The care-changing unit needs all six slots:

In an inpatient ward, when a patient’s deterioration risk crosses a defined threshold, the responsible nurse or physician escalates assessment within a specified time window, triggering a predefined review, diagnostic or treatment action, reducing deterioration events or time-to-rescue.

CoMET, a pragmatic cluster-randomised trial across 10,422 inpatient visits, evaluated a passive display of AI-based deterioration risk trajectories. The intervention came with education and implementation planning, and the trial was methodologically rigorous. What the intervention did not include was a mandated response. The primary outcome did not move. The authors themselves pointed to the next design step: clinician interpretation, care processes and communication practices.[2]

PRAIM and CoMET ran on credible models. Only one had a designed care-changing unit. The difference was the action contract, not the algorithm.

A short methodological note for the careful reader. PRAIM and CoMET sit in different evidence categories: PRAIM is a prospective real-world implementation study in population-based screening; CoMET is a pragmatic cluster-randomised trial of passive display. They are deliberately compared on one axis only: one design tied the signal to a clinical decision before the window closed; the other tested visibility without a mandated response.

Mini-box: this applies to biomarkers too

Clinical Design isn’t only about digital tools. The same primitive applies to a molecular signal trying to change a treatment decision.

The careless framing of ctDNA in stage II colon cancer is “ctDNA can be measured”. The care-changing unit ties a molecular signal to a treatment decision: after surgery, ctDNA reclassifies recurrence risk, the oncologist’s adjuvant chemotherapy decision changes within the treatment window, unnecessary chemotherapy goes down, and recurrence-free survival is preserved.

The DYNAMIC 5-year outcomes (median follow-up 59.7 months) showed 5-year recurrence-free survival of 88% with ctDNA-guided management versus 87% with standard management, with a smaller proportion of patients receiving adjuvant chemotherapy in the ctDNA-guided arm.[3] The biomarker rewires the decision; its value lives in that rewiring, not in the existence of a measurable signal.

4. The Clinical Design Loop: Operator Manual

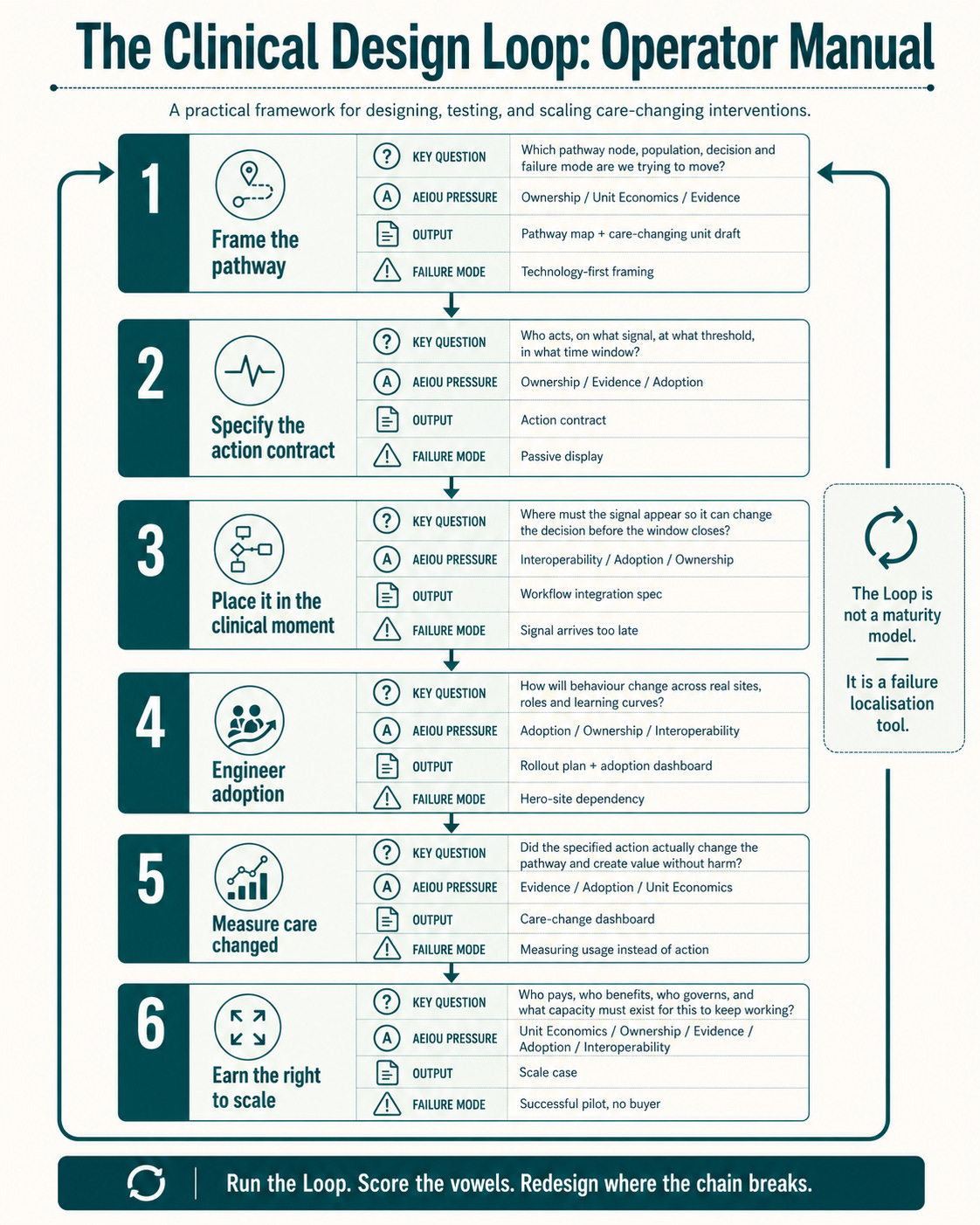

This Loop is designed to be used as a review discipline before funding a pilot, scaling a digital tool, launching a biomarker programme, redesigning a pathway, deploying an AI model, or backing a healthtech company.

What follows are six operator cards, one per stage. Each card holds a steering-committee question, the AEIOU pressure that lives there, the owner who must be named, the output that has to leave the room, the KPI that proves the work, and the failure mode that kills it.

Stage 1. Frame the pathway, not the technology

Question: Which pathway node, population, decision and failure mode are we trying to move?

AEIOU pressure: Ownership / Unit Economics / Evidence

Owner: Pathway owner + strategy or product lead

Output: One-page pathway map; baseline decision-action chain; draft care-changing unit

KPI: Eligible population defined; baseline measured; decision point named; budget owner identified

Failure mode: Technology-first framing; wrong denominator; vague “improve care” claim; no budget owner

Stage 2. Specify the action contract

Question: Who acts, on what signal, at what threshold, within what time window, with what safety boundaries?

AEIOU pressure: Ownership / Evidence / Adoption

Owner: Named clinical owner: MDT lead, GP partner, radiology lead, specialist nurse, ward physician

Output: Action contract; escalation protocol; override rules; safety boundaries

KPI: Signal-to-action rate; action latency; override rate; escalation completion

Failure mode: Passive display; ambiguous threshold; no accountable actor; signal generates anxiety, not action

A short worked example. The ADMINISTER trial bundled structured therapy data, vital signs and guideline-directed medical therapy (GDMT) recommendations into the clinician’s workflow before HFrEF outpatient consults. Over 12 weeks, GDMT optimisation improved versus usual care, without an increase in patient-reported time burden or a loss of quality of life.[4] The care-changing unit reads as: “before each consult, the clinician receives structured therapy, vital sign and guideline recommendation data, then adjusts GDMT within a 12-week optimisation window, improving GDMT score and increasing the probability of reaching optimal medical therapy.” The technology mattered, but the intervention was the medication-optimisation micro-pathway, with an explicit actor, signal, threshold and window.
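The Stage 2 card can be turned into something checkable. A minimal sketch, assuming a single numeric risk signal, a fixed threshold and a fixed response window; the class, the outcome labels and the example values are hypothetical and not drawn from ADMINISTER or any other trial above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class ActionContract:
    """Hypothetical encoding of a Stage 2 action contract."""
    actor_role: str       # who is accountable for the next action
    threshold: float      # signal value at or above which action is owed
    window: timedelta     # how long the actor has to act

    def classify(self, signal_value: float, signal_time: datetime,
                 action_time: Optional[datetime] = None) -> str:
        if signal_value < self.threshold:
            return "below_threshold"   # no action owed
        if action_time is None:
            return "no_action"         # action owed, never taken
        if action_time - signal_time <= self.window:
            return "in_window"         # contract honoured
        return "late"                  # acted after the window closed


def signal_to_action_rate(outcomes: list[str]) -> Optional[float]:
    """Share of owed actions taken inside the window; None if nothing was owed."""
    owed = [o for o in outcomes if o != "below_threshold"]
    if not owed:
        return None
    return sum(o == "in_window" for o in owed) / len(owed)


contract = ActionContract("ward physician", threshold=0.7, window=timedelta(hours=1))
t0 = datetime(2025, 1, 6, 8, 0)
outcomes = [
    contract.classify(0.82, t0, t0 + timedelta(minutes=40)),  # in_window
    contract.classify(0.75, t0, t0 + timedelta(hours=3)),     # late
    contract.classify(0.91, t0),                              # no_action
    contract.classify(0.40, t0),                              # below_threshold
]
print(signal_to_action_rate(outcomes))  # one of three owed actions in window
```

Returning None for an empty denominator, rather than 0.0, is a deliberate choice: a contract that never fired has not shown 0% conversion, it has shown nothing.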

Stage 3. Place it in the clinical moment

Question: Where must the signal appear so that it can change the decision before the window closes?

AEIOU pressure: Interoperability / Adoption / Ownership

Owner: Clinical operations + EHR/viewer/informatics owner + frontline user

Output: Workflow integration spec; screen and prompt placement; data handoff map

KPI: % signals reviewed before deadline; added clicks or time; failed handoffs; cognitive-load proxy

Failure mode: Separate portal; result appears too late; API without semantic placement; alert fatigue

Interoperability stops being a technical concept here. It becomes semantic placement. An API that delivers a result at 3 a.m. is useless if the decision happens at 8 a.m. somewhere the result never appears.

Stage 4. Engineer adoption in the field

Question: How will behaviour change across real sites, shifts, roles and learning curves?

AEIOU pressure: Adoption / Ownership / Interoperability

Owner: Implementation lead + site champions + operations

Output: Rollout plan; training model; champion network; feedback cadence; adoption dashboard

KPI: Eligible-case penetration; active user rate; use per eligible patient; site variance; drop-off over time

Failure mode: Hero-site dependency; one-off training; adoption treated as comms; low-use sites hidden by averages

Average adoption hides local failure. Variance is the metric that tells the truth.
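The point about averages is easy to show with arithmetic. A minimal sketch with four hypothetical sites, in which the pooled penetration looks respectable while one site barely delivers the action; the site names, counts and the 0.5 floor are invented for illustration.

```python
from statistics import mean, pstdev


def site_penetration(eligible: dict[str, int], acted: dict[str, int]) -> dict[str, float]:
    """Eligible-case penetration per site: cases receiving the action / eligible cases."""
    return {site: acted.get(site, 0) / n for site, n in eligible.items() if n > 0}


def lagging_sites(penetration: dict[str, float], floor: float) -> list[str]:
    """Sites below a locally agreed floor (the floor is a design choice)."""
    return [site for site, p in penetration.items() if p < floor]


# Hypothetical network: one hero site, one site that barely uses the tool.
eligible = {"A": 200, "B": 150, "C": 120, "D": 80}
acted = {"A": 180, "B": 105, "C": 78, "D": 12}

pen = site_penetration(eligible, acted)
values = list(pen.values())
pooled = sum(acted.values()) / sum(eligible.values())
print(f"pooled {pooled:.2f}, site mean {mean(values):.2f}, "
      f"sd {pstdev(values):.2f}, min {min(values):.2f}")
print(lagging_sites(pen, floor=0.5))  # ['D']
```

On these invented numbers the pooled rate is about 0.68 and the site mean 0.60, while site D sits at 0.15; only the per-site view surfaces it.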

Stage 5. Measure care changed

Question: Did the specified action actually change the pathway, and did that change create value without harm?

AEIOU pressure: Evidence / Adoption / Unit Economics

Owner: Analytics or RWE lead + clinical owner + medical and safety governance

Output: Measurement plan; event log; care-change dashboard; evidence package

KPI: Action delta; time-to-action; downstream utilisation; safety signals; outcome proxy; variance

Failure mode: Measuring logins, displays or AUC instead of actions; no baseline; endpoints too distant; no comparator

This is also where regulation is pointing. The FDA’s 2025 predetermined change control plan (PCCP) guidance for AI-enabled device software functions supports iterative improvement through predefined modifications, validation methods and impact assessment.[5] Stage 5 of the Loop builds for that reality: evidence becomes a lifecycle property, not a launch event.

Stage 6. Earn the right to scale

Question: Who pays, who benefits, who governs, and what capacity must exist for this to keep working?

AEIOU pressure: Unit Economics / Ownership / Evidence / Adoption / Interoperability

Owner: Executive sponsor + finance, access, payer owner + pathway governance

Output: Scale case; budget-impact model; capacity plan; reimbursement and guideline roadmap; operating model

KPI: Cost per actionable case; budget-owner ROI; time-to-go-live; sites live; durability at 6 to 12 months

Failure mode: Successful pilot with no buyer; benefits outside paying budget; downstream capacity bottleneck; governance gap

A short worked example. The NHS national FFR-CT implementation rolled out across 27 hospitals in 12 months. The programme included 90,553 coronary CT angiography (CCTA) patients and 7,863 FFR-CT patients. Median time from funding to programme go-live was 4.7 months. Invasive coronary angiography fell from 16.0% to 14.9%. Downstream non-invasive cardiac testing fell from 189 to 167 per 1,000. By the end of the programme, 54 sites were commissioned.[6] That short funding-to-go-live time is the operator signal. Financing (the Innovation and Technology Payment), guideline placement (NICE guidance), commissioning, operational readiness and pathway integration had been designed as part of the programme, not left as an afterthought.[7] Scale was assembled out of payment, commissioning, guideline placement, capacity, workflow integration and outcome monitoring. It was earned, not waited for.
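The Stage 6 KPIs, cost per actionable case and budget-owner ROI, are simple arithmetic, but the second one is where the “benefits outside paying budget” failure mode shows up. A minimal sketch with hypothetical figures and budget names, comparing the return seen by the whole system with the return seen by the budget that actually pays; none of these numbers come from the FFR-CT programme.

```python
def cost_per_actionable_case(total_cost: float, actionable_cases: int) -> float:
    """Programme cost divided by cases where the specified action was possible."""
    return total_cost / actionable_cases


def roi(cost: float, benefit: float) -> float:
    """Simple return on investment: (benefit - cost) / cost."""
    return (benefit - cost) / cost


# Hypothetical pilot: one budget pays, benefits accrue across several budgets.
cost = 100_000.0
benefits_by_budget = {"imaging": 40_000.0, "inpatient cardiology": 120_000.0}
payer = "imaging"

system_view = roi(cost, sum(benefits_by_budget.values()))
payer_view = roi(cost, benefits_by_budget.get(payer, 0.0))

print(cost_per_actionable_case(cost, 400))  # 250.0
print(f"system ROI {system_view:+.0%}, payer ROI {payer_view:+.0%}")  # system ROI +60%, payer ROI -60%
```

A pilot that pays back for the system but loses money for its own budget is the “successful pilot with no buyer” on the Stage 6 card.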

5. How to Read the AEIOU Pressure

The AEIOU pressure on each card is the operational core of the Loop. It tells you which kind of risk you’re reducing at each stage, and which one you’re ignoring.

At Stage 1, Ownership and Unit Economics already matter, because the pathway node decides who has authority, who pays and who benefits. Frame the wrong node, and the economics fail even if the technology works.

At Stage 2, Ownership turns clinical. The question stops being “who likes the innovation?” and becomes “who is accountable for the next action, by name?”

At Stage 3, Interoperability becomes semantic placement, not data exchange in the abstract.

At Stage 4, Adoption becomes field behaviour. Training is one part. Real adoption varies by site, role, shift, experience, staffing, incentives and fatigue.

At Stage 5, Evidence becomes a run-rate property of the pathway. The question shifts from “can the technology work?” to “did the action change, and did the pathway improve?”

At Stage 6, every vowel returns. Scale is where local success collides with financing, governance, reimbursement, workforce capacity, guideline legitimacy and operational variance.

That’s why AEIOU sits inside the Loop. At every stage, the operator question is the same:

Which vowel are we de-risking? Which vowel are we ignoring?

6. The Loop Is Not a Maturity Model

A maturity model tells you how advanced something looks. The Loop tells you where it will break.

That distinction matters. Most failed innovations don’t fail because every part is wrong. They fail because one link in the decision-action chain was never designed.

A model can be accurate, and still fail at the action contract because the threshold is ambiguous.

A tool can be integrated, and still fail at clinical-moment placement because the signal arrives after the decision.

A pilot can be adopted at a hero site, and still fail at field adoption because variance is invisible across the network.

A biomarker can predict risk, and still fail at evidence because no one changes the treatment.

A product can demonstrate value, and still fail at unit economics because the value accrues to a different budget than the one paying.

The Loop is a failure localisation tool. Used well, it tells you which link broke, which vowel failed, and which stage to redesign.

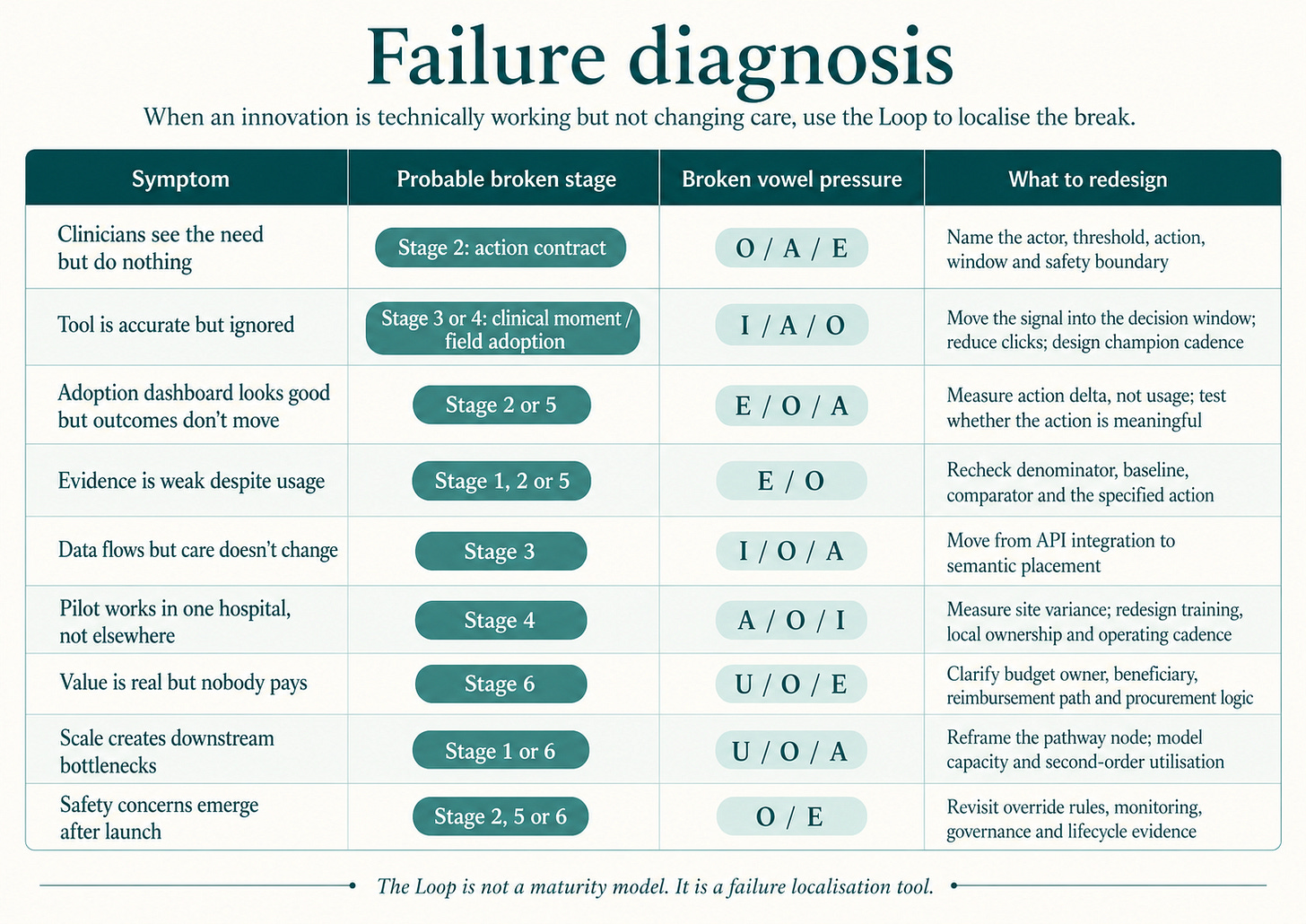

7. Failure Diagnosis Table

Use this table when an innovation is technically “working” but isn’t changing care. Match the symptom, locate the broken stage, identify the vowel under pressure, redesign that link.

Most steering committees recognise three or four rows from the last 12 months of their own pilot portfolios.

8. The Minimum Viable Care-Change Dashboard

Most innovation dashboards measure what’s easy: logins, users, alerts, reports, sites live, patients screened. All useful, none of them sufficient on their own.

A minimum viable care-change dashboard rests on eight metrics.

1. Eligibility denominator. How many patients, cases or decisions should have been touched? Without a denominator, adoption is theatre.

2. Signal generation. How many alerts, flags, recommendations, test results or risk categories were produced? This is the volume of possible intervention.

3. Signal review. What fraction of signals were seen by the right actor before the decision window closed? A signal reviewed too late is often the same as a signal never reviewed.

4. Signal-to-action conversion. What fraction led to the specified action, deferral, escalation, override or documented non-action? This is the core care-change metric.

5. Action latency. How long from signal to action? In many pathways, timing functions as the intervention itself, not as a process metric attached to one.

6. Pathway delta. What changed downstream? Recalls, biopsies, referrals, angiograms, prescriptions, admissions, MDT discussions, treatment starts, avoided tests, avoided visits, earlier escalation.

7. Safety and burden. What unintended harms, false positives, extra workload, inequities or capacity constraints appeared? A clinical innovation that creates unmeasured burden hasn’t been designed.

8. Variance and durability. How does performance vary by site, clinician, month, patient subgroup, shift or learning curve? Average adoption hides local failure.

The metric that organises the other seven is the action delta. A tool can be live, integrated, used, paid for, and clinically endorsed. If the action didn’t change, care didn’t change.
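A minimal sketch of how part of this dashboard could be computed from a case-level event log, assuming each eligible case records when a signal fired, when the right actor reviewed it, when the specified action (or documented response) happened, and when the decision window closed. It covers metrics 1 to 5 and a by-site slice of metric 8; pathway delta and safety and burden need downstream and harm data that this log does not hold. The Case fields and the example log are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Case:
    """One eligible case in a hypothetical event log."""
    site: str
    window_closes_at: datetime          # when the decision window closes
    signal_at: Optional[datetime]       # None: no signal was generated
    reviewed_at: Optional[datetime]     # when the right actor saw the signal
    acted_at: Optional[datetime]        # specified action or documented response


def ratio(num: int, den: int) -> Optional[float]:
    # An empty denominator is reported as None, never as 0%.
    return num / den if den else None


def dashboard(cases: list[Case]) -> dict:
    signalled = [c for c in cases if c.signal_at is not None]
    in_window = [c for c in signalled
                 if c.reviewed_at is not None and c.reviewed_at <= c.window_closes_at]
    acted = [c for c in signalled if c.acted_at is not None]
    latency_h = [(c.acted_at - c.signal_at) / timedelta(hours=1) for c in acted]

    by_site: dict[str, list[Case]] = {}
    for c in signalled:
        by_site.setdefault(c.site, []).append(c)

    return {
        "eligible": len(cases),                                        # 1. denominator
        "signals": len(signalled),                                     # 2. signal generation
        "reviewed_in_window": ratio(len(in_window), len(signalled)),   # 3. signal review
        "signal_to_action": ratio(len(acted), len(signalled)),         # 4. conversion
        "median_latency_h": median(latency_h) if latency_h else None,  # 5. action latency
        "conversion_by_site": {                                        # 8. variance, by site
            site: ratio(sum(c.acted_at is not None for c in cs), len(cs))
            for site, cs in by_site.items()
        },
    }


t = datetime(2025, 3, 3, 8, 0)
close = t + timedelta(hours=4)
cases = [
    Case("A", close, t, t + timedelta(minutes=30), t + timedelta(hours=1)),
    Case("A", close, t, t + timedelta(hours=2), t + timedelta(hours=3)),
    Case("B", close, t, t + timedelta(hours=5), None),  # seen after the window closed
    Case("B", close, None, None, None),                 # eligible, but no signal fired
]
m = dashboard(cases)
print(m["median_latency_h"], m["conversion_by_site"])  # 2.0 {'A': 1.0, 'B': 0.0}
```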

9. Where This Sits Next to Implementation Science

Clinical Design does not replace implementation science. It changes the starting point.

RE-AIM, PRISM, CFIR (in its 2022 update) and NASSS already give the field diagnostic depth across reach, effectiveness, adoption, implementation, maintenance, contextual barriers, equity, non-adoption, abandonment, scale-up, spread and sustainability.[8][9][10] These frameworks tell you which factors explain why something stuck or didn’t.

Clinical Design adds a different layer: operator sequence. It tells you, beyond which factors matter, in what order to design the decision-action chain so that an innovation becomes care. That sequence is the Loop. AEIOU is the scorecard the Loop runs at every stage. Implementation science gives diagnostic depth. Clinical Design adds operator sequence. They sit together comfortably.

10. The Operator Rule: Better Questions

The Loop changes the review conversation. Take the questions an investment committee usually asks and swap each one for its operator equivalent.

Instead of “is the tool validated?”, ask: what action will change, by whom, when, with what consequence?

Instead of “is it integrated?”, ask: does the signal land inside the clinical moment before the window closes?

Instead of “are users adopting it?”, ask: are eligible patients receiving the intended action?

Instead of “does the pilot show value?”, ask: does the budget owner who pays also capture enough of the benefit to sustain it?

Instead of “can we scale this?”, ask: which vowel will break first when this leaves the hero site?

Five swapped questions. A different review meeting.

The One-Page Clinical Design Review

Use this before approving a pilot:

· Write the care-changing unit in one sentence (six slots).

· Run the six Loop stages with named owners and outputs.

· Apply the AEIOU RAG score at every stage.

· Identify the weakest vowel and the broken link in the chain.

· Decide what gets redesigned before the pilot is funded.
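The RAG step can be sketched as a gate. The stage-to-vowel mapping below is copied from the AEIOU pressure line on each operator card, and the rule that any red key vowel blocks the go decision follows the text; treating an unscored vowel as red and picking the weakest vowel by lowest average score are assumptions of this sketch, and all names are hypothetical.

```python
from enum import IntEnum


class RAG(IntEnum):
    RED = 0
    AMBER = 1
    GREEN = 2


# Vowels under pressure at each Loop stage, as listed on the operator cards.
STAGE_PRESSURE = {
    1: ("Ownership", "Unit Economics", "Evidence"),
    2: ("Ownership", "Evidence", "Adoption"),
    3: ("Interoperability", "Adoption", "Ownership"),
    4: ("Adoption", "Ownership", "Interoperability"),
    5: ("Evidence", "Adoption", "Unit Economics"),
    6: ("Unit Economics", "Ownership", "Evidence", "Adoption", "Interoperability"),
}

Scores = dict[int, dict[str, RAG]]


def score(scores: Scores, stage: int, vowel: str) -> RAG:
    # Assumption: a key vowel nobody scored counts as red.
    return scores.get(stage, {}).get(vowel, RAG.RED)


def review(scores: Scores) -> tuple[bool, list[tuple[int, str]]]:
    """Go only if no key vowel at any stage is red; return the red links."""
    blockers = [(stage, vowel)
                for stage, vowels in STAGE_PRESSURE.items()
                for vowel in vowels
                if score(scores, stage, vowel) == RAG.RED]
    return (not blockers, blockers)


def weakest_vowel(scores: Scores) -> str:
    """Vowel with the lowest average RAG score across the stages it applies to."""
    seen: dict[str, list[int]] = {}
    for stage, vowels in STAGE_PRESSURE.items():
        for vowel in vowels:
            seen.setdefault(vowel, []).append(int(score(scores, stage, vowel)))
    return min(seen, key=lambda v: sum(seen[v]) / len(seen[v]))


# Hypothetical review: everything green except unit economics at scale.
scores: Scores = {s: {v: RAG.GREEN for v in vs} for s, vs in STAGE_PRESSURE.items()}
scores[6]["Unit Economics"] = RAG.RED
go, blockers = review(scores)
print(go, blockers)           # False [(6, 'Unit Economics')]
print(weakest_vowel(scores))  # Unit Economics
```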

11. Closing

A clinical innovation doesn’t become real when the model is accurate, the test is available, the device is approved or the dashboard goes live.

It becomes real when a care-changing unit survives all five vowels.

The Loop is how you test that. AEIOU names the constraints. The Loop sequences the work. The operator manual converts both into a review discipline you can use on a Tuesday morning.

Don’t ask whether the tool is live. Ask whether the action changed. Then ask which vowel failed. Then run the Loop again.

— Marcos

Note & Disclaimers

Context: The Clinical Decade (and this article) explores the theoretical foundations of Clinical Design, a teaching framework created by Marcos Gallego. It has been developed through independent research and academic activities, and is shared here as a personal contribution to the field.

Independence: Views and materials published in The Clinical Decade are personal and independent and don’t represent any employer, client, or institution.

License: Licensed under Creative Commons Attribution–NonCommercial–NoDerivatives 4.0 International (CC BY-NC-ND 4.0), unless otherwise stated.

1. Eisemann N, Bunk S, Mukama T, et al. Nationwide real-world implementation of AI for cancer detection in population-based mammography screening. Nature Medicine, 2025; 31: 917–924. https://www.nature.com/articles/s41591-024-03408-6

2. Keim-Malpass J, et al. A randomized controlled trial of artificial intelligence-based analytics for clinical deterioration. Scientific Reports, 2026. https://www.nature.com/articles/s41598-026-39051-z

3. Tie J, et al. Circulating tumor DNA analysis guiding adjuvant therapy in stage II colon cancer: 5-year outcomes of the randomized DYNAMIC trial. Nature Medicine, 2025. https://www.nature.com/articles/s41591-025-03579-w

4. Man JP, et al. Digital consults in heart failure care: a randomized controlled trial. Nature Medicine, 2024. https://www.nature.com/articles/s41591-024-03238-6

5. FDA. Marketing Submission Recommendations for a Predetermined Change Control Plan for Artificial Intelligence-Enabled Device Software Functions. Guidance, 2025. https://www.fda.gov/regulatory-information/search-fda-guidance-documents/marketing-submission-recommendations-predetermined-change-control-plan-artificial-intelligence

6. Fairbairn TA, et al. Implementation of a national AI technology program on cardiovascular outcomes and the health system. Nature Medicine, 2025. https://www.nature.com/articles/s41591-025-03620-y

7. NICE. HTG429, chapter 4: NHS considerations. https://www.nice.org.uk/guidance/htg429/chapter/4-NHS-considerations

8. RE-AIM / PRISM. https://re-aim.org/

9. Damschroder LJ, et al. The updated Consolidated Framework for Implementation Research based on user feedback. Implementation Science, 2022. https://link.springer.com/article/10.1186/s13012-022-01245-0

10. Greenhalgh T, et al. Beyond Adoption: A New Framework for Theorizing and Evaluating Nonadoption, Abandonment, and Challenges to the Scale-Up, Spread, and Sustainability of Health and Care Technologies. Journal of Medical Internet Research, 2017. https://www.jmir.org/2017/11/e367